Let’s cut right to the chase: you're reading this blog because you want to know how best to store data for your data-driven business. Well, you've come to the right place!

Throughout this post, we'll look at the definitions and sample use cases for data vaults, warehouses, lakes, and hubs. The differences between them are subtle, but each serves a different purpose in the data world today.

What is a Data Vault?

Data Vault Definition

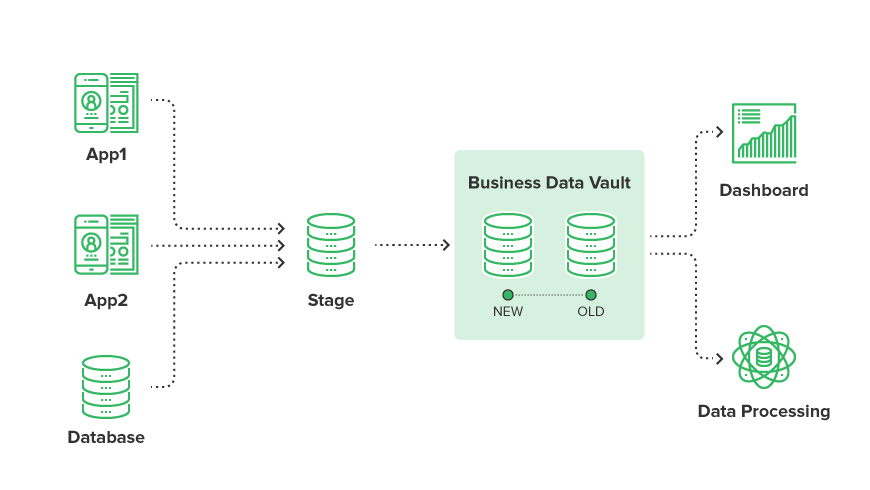

A data vault is a system made up of a model,

Data Vault Use Case

The biggest data vault use case is when a business, such as a bank, needs to audit its data.

Let’s say you decide you need to update your security model to include additional fields and new applications in your enterprise. Using a data vault, you are able to checkpoint the time you made the security model changes and update your infrastructure with the changes, including all associated applications. This means the business team continues receiving the full view of historical and current information regarding the audit trail.
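The audit-trail idea above can be illustrated with a small sketch. It assumes the common Data Vault convention of a hub table (business keys) plus an insert-only satellite table (descriptive attributes stamped with a load time). The table names, columns, and the `security_as_of` helper are hypothetical, and SQLite merely stands in for whatever database you actually use.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE hub_user (
    user_key      TEXT PRIMARY KEY,   -- business key
    load_ts       TEXT NOT NULL,
    record_source TEXT NOT NULL
);
CREATE TABLE sat_user_security (
    user_key      TEXT NOT NULL,
    load_ts       TEXT NOT NULL,      -- when this version was loaded
    access_level  TEXT,
    mfa_enabled   INTEGER,            -- field introduced by the newer security model
    record_source TEXT NOT NULL,
    PRIMARY KEY (user_key, load_ts)
);
""")

conn.execute("INSERT INTO hub_user VALUES ('C-1', '2023-01-01T00:00:00', 'crm')")

# Rows are only ever inserted, never updated, so every past state stays queryable.
conn.executemany(
    "INSERT INTO sat_user_security VALUES (?, ?, ?, ?, ?)",
    [
        ("C-1", "2023-01-01T00:00:00", "basic", None, "crm"),  # old security model
        ("C-1", "2023-06-01T00:00:00", "basic", 1, "iam"),     # after the model change
    ],
)

def security_as_of(user_key, ts):
    """Return (access_level, mfa_enabled, load_ts) as known at time ts, or None."""
    return conn.execute(
        """SELECT access_level, mfa_enabled, load_ts
           FROM sat_user_security
           WHERE user_key = ? AND load_ts <= ?
           ORDER BY load_ts DESC
           LIMIT 1""",
        (user_key, ts),
    ).fetchone()

print(security_as_of("C-1", "2023-03-01T00:00:00"))  # state before the change
print(security_as_of("C-1", "2023-12-31T00:00:00"))  # state after the change
```

Because the ISO 8601 timestamps sort lexicographically, a plain string comparison is enough to pick the version that was current at the checkpoint.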

What is a Data Warehouse?

Data Warehouse Definition

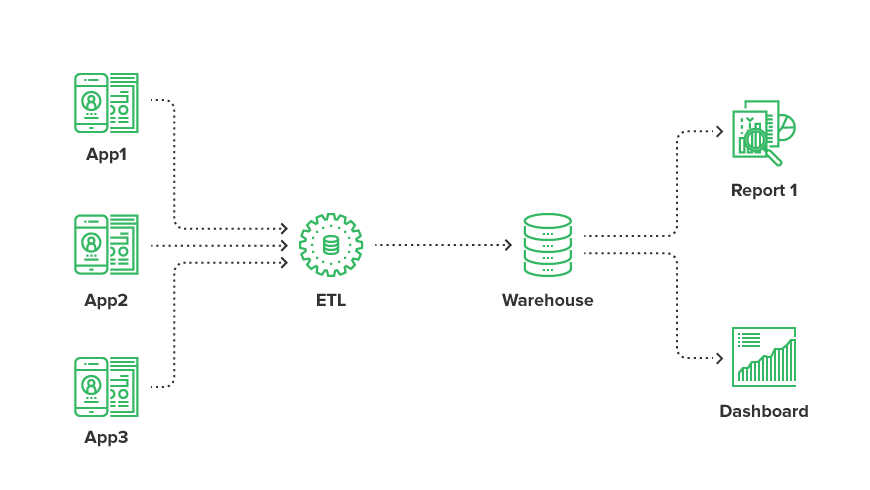

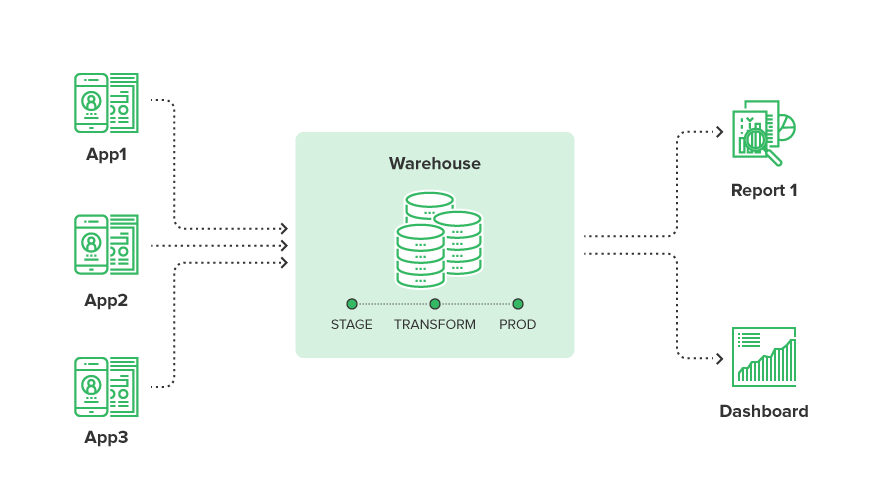

A data warehouse is a consolidated, structured repository for storing data assets. Data warehouses will store data in one of two ways: Star Schema or 3NF, but these are only fundamental principles in how you store your data model. We have seen, advised, and implemented both principles, but the one major flaw is that everything must be strictly defined (both in

Data Warehouse Use Case

The most common use case for creating and using a data warehouse is to consolidate data and answer a business-related question. This question may be, 'How many users are visiting my product pages from North America?' This ties the information you're receiving from your end users to a business question that needs to be answered from a structured data set. This is what most would identify as the cookie-cutter business intelligence solution.
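As a rough illustration, the North America question could be answered against a tiny star schema like the one below. The fact and dimension tables, their columns, and the sample rows are all made up for this sketch, and SQLite is used only because it ships with Python.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension tables describe the "who/where/what" (hypothetical names).
CREATE TABLE dim_region (region_id INTEGER PRIMARY KEY, continent TEXT);
CREATE TABLE dim_page   (page_id   INTEGER PRIMARY KEY, page_type TEXT);
-- The fact table records one row per page view.
CREATE TABLE fact_page_view (user_id INTEGER, region_id INTEGER, page_id INTEGER);

INSERT INTO dim_region VALUES (1, 'North America'), (2, 'Europe');
INSERT INTO dim_page   VALUES (10, 'product'), (11, 'blog');
INSERT INTO fact_page_view VALUES
    (100, 1, 10), (100, 1, 10), (101, 1, 10), (102, 2, 10), (103, 1, 11);
""")

query = """
SELECT COUNT(DISTINCT f.user_id)
FROM fact_page_view AS f
JOIN dim_region AS r ON r.region_id = f.region_id
JOIN dim_page   AS p ON p.page_id   = f.page_id
WHERE r.continent = 'North America'
  AND p.page_type = 'product'
"""
north_america_product_visitors = conn.execute(query).fetchone()[0]
print(north_america_product_visitors)  # prints 2 (users 100 and 101)
```

The query only works because every column it touches was defined up front, which is the strictness trade-off described in the definition above.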

However, an alternative approach is becoming more popular, especially with cloud-based and more powerful warehouses.

Organizations are adopting the ELT (extract, load, transform) approach. This entails “staging” the raw data in the warehouse (such as HP Vertica) and then letting the database itself perform the transformations that a separate ETL tool would traditionally handle. Essentially, you are performing the most expensive operations on the system where you have the most resources.
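A minimal sketch of that load-first, transform-later pattern follows. SQLite stands in for the warehouse here, and the `stg_orders` staging table, its columns, and the sample rows are assumptions made for illustration only.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # stand-in for a real warehouse connection

# Load: stage the raw values exactly as they arrive, all as text.
conn.execute("CREATE TABLE stg_orders (order_id TEXT, amount TEXT, country TEXT)")
raw_rows = [("1", " 19.90", "us"), ("2", "5.00 ", "US"), ("3", "12.50", "de")]
conn.executemany("INSERT INTO stg_orders VALUES (?, ?, ?)", raw_rows)

# Transform: cleaning, casting, and aggregation all run inside the database.
conn.execute("""
CREATE TABLE orders_by_country AS
SELECT UPPER(TRIM(country))            AS country,
       SUM(CAST(TRIM(amount) AS REAL)) AS total_amount
FROM stg_orders
GROUP BY UPPER(TRIM(country))
""")

totals = dict(conn.execute("SELECT country, total_amount FROM orders_by_country"))
print(totals)
```

The client code never touches individual values; it only ships raw rows in and issues SQL, so the heavy lifting scales with the warehouse rather than with the machine running the script.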

What is a Data Lake?

Data Lake Definition

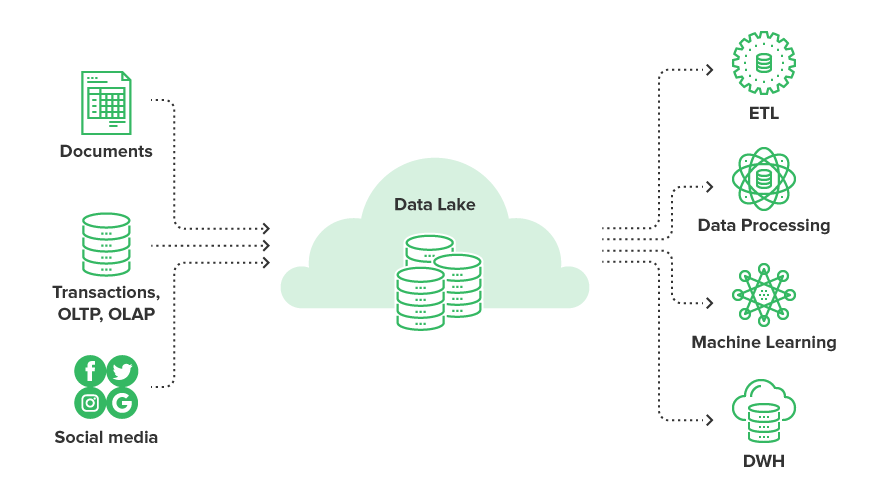

A data lake is a term that represents a methodology of storing raw data in a single repository. The type of data

The challenge begins when you try to make sense of your data. If you are dumping data into a data lake, how do you know which data you need and which you don’t? How do you determine where the data resides in the lake? If it is not managed correctly, a data lake can very quickly become a data swamp.
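One common way to keep track of what lands in the lake is to record a catalog entry for every file at ingest time. The sketch below is only an illustration of that idea: a temporary directory stands in for object storage, and the `ingest` and `find` helpers and the catalog fields are hypothetical.

```python
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path

lake_root = Path(tempfile.mkdtemp())  # stand-in for S3, Azure Blob, etc.
catalog = []                          # a real catalog would be persisted somewhere

def ingest(source, name, payload, fmt):
    """Store the payload untouched and record where it went."""
    target = lake_root / source / name
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_bytes(payload)
    catalog.append({
        "path": str(target),
        "source": source,
        "format": fmt,
        "size_bytes": len(payload),
        "ingested_at": datetime.now(timezone.utc).isoformat(),
    })
    return target

def find(source=None, fmt=None):
    """Answer 'where does this data reside?' by filtering the catalog."""
    return [
        entry for entry in catalog
        if (source is None or entry["source"] == source)
        and (fmt is None or entry["format"] == fmt)
    ]

# Raw data of any type goes in as-is.
ingest("crm", "customers.csv", b"id,name\n1,Ada\n", "csv")
ingest("web", "clicks.json", json.dumps([{"user": 1, "page": "/pricing"}]).encode(), "json")
ingest("web", "banner.png", b"\x89PNG", "png")

for entry in find(source="web"):
    print(entry["path"], entry["format"])
```

The files themselves stay raw; only the metadata is structured, which is what keeps the lake searchable.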

Data Lake Use Case

The use cases we see for creating a data lake revolve around reporting, visualization, analytics, and machine learning.

Here is the architecture we see evolving:

What is a Data Hub?

Data Hub Definition

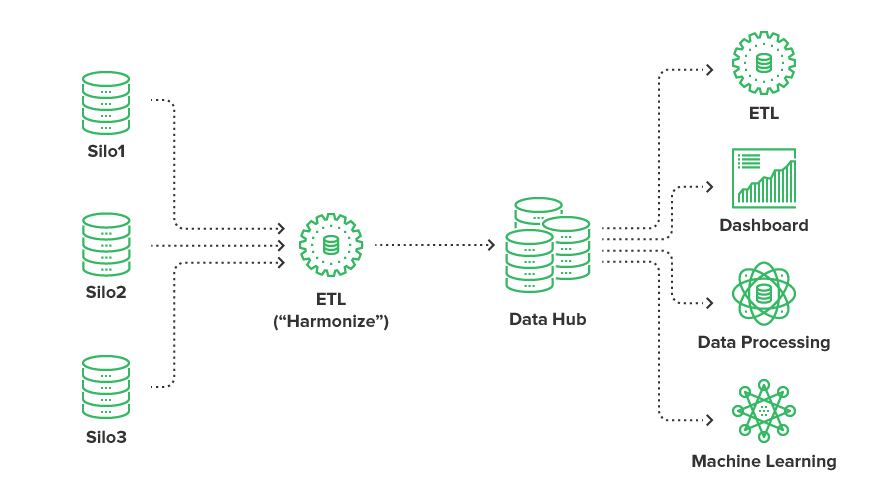

A data hub is a centralized system where data is stored, defined, and served from. We like to think of it as a hybrid of a data lake and a data warehouse, as it provides a central repository for your applications to dump data. It also adds a level of harmonization at ingest so the data is indexed and can easily be queried.

Please note that this is not the same as a data warehouse architecture, as the ETL processing is merely for indexing the data you have rather than mapping it into a strict structure. The challenge comes when you implement the data hub and have to work out how to harmonize all of your siloed data sources.
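To make "harmonization at ingest" more concrete, here is a small sketch in which records are stored unchanged but a handful of fields are indexed under shared names. The alias map, the `ingest` and `query` helpers, and the sample records are assumptions for illustration, not a description of any particular data hub product.

```python
from collections import defaultdict

# Hypothetical mapping from source-specific field names to shared index names.
FIELD_ALIASES = {
    "email": "email", "e_mail": "email", "customer_email": "email",
    "country": "country", "ctry": "country",
}

documents = {}             # doc_id -> original record, stored unchanged
index = defaultdict(set)   # (canonical field, normalized value) -> doc_ids

def normalize(value):
    return str(value).strip().lower()

def ingest(doc_id, record):
    """Keep the record as-is, but index the fields we know how to harmonize."""
    documents[doc_id] = record
    for field, value in record.items():
        canonical = FIELD_ALIASES.get(field)
        if canonical is not None:
            index[(canonical, normalize(value))].add(doc_id)

def query(field, value):
    """Return the original records whose harmonized field matches the value."""
    doc_ids = index.get((field, normalize(value)), set())
    return [documents[doc_id] for doc_id in sorted(doc_ids)]

# Two siloed sources that name the same attribute differently.
ingest("crm-1", {"e_mail": "Ada@Example.com", "ctry": "UK"})
ingest("shop-7", {"customer_email": "ada@example.com", "total": 42})

print(query("email", "ada@example.com"))
```

Unlike the warehouse approach, nothing is forced into a fixed table layout: unknown fields such as `total` are simply stored without being indexed.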

Data Hub Use Case

In general, we see the same use cases for a data hub as we would for a data lake: reporting, visualization, analytics, and machine learning.

Conclusion

Hopefully, you have learned a little bit about each of these data models, as well as their individual values in dealing with multi-structured data.

At the end of the day, there is

This means that you must analyze your requirements, needs, and budget before deciding which approach to use. Technology is constantly evolving, and each of these models will evolve with it.