What is Data Quality?

Data quality is a measure of data’s fitness for purpose. It covers many different quantitative and qualitative properties including:

- Completeness

- Internal consistency

- Accuracy

Data quality processes are designed to ensure that the data remains useful and that any problematic data is discovered as early as possible and then manually or automatically corrected.

Why Is Data Quality Important?

Most organizations produce and consume vast quantities of data. Many decisions, ranging from the mundane to the strategic, are based on data from several sources. As such, high data quality is essential for the efficient function of the organization.

Even when the importance of data quality is recognized, issues often go unnoticed or remain uncorrected for prolonged periods of time. This often stems from the fact that humans are good at dealing with low-quality data (a postman can usually deliver to a poorly written address).

Automated systems, on the other hand, often cope badly with poor quality data and this often leads to increased manual labor or sub-optimal decisions that have negative financial consequences.

Before your data enters the ETL process, it's in your best interest to only work with "good" data – that is, data that conforms to its respective domain rules, ranges, allowed values, and perhaps other restrictions. If it doesn't, you'll want to log and remove all incorrect records so as not to pollute your transformation, as well as so you can report on and fix the data later.

Read more: Building data pipelines to handle bad data: How to ensure data quality

How To Measure Data Quality

Measuring Data Quality

To evaluate data quality, it is helpful to define a set of measures that provide a common assessment framework.

These measures provide information about some measurable aspect of the data in question. There are six standard measures for data quality:

- Completeness

- Accuracy

- Consistency

- Validity

- Integrity

- Timeliness

1. Completeness

Completeness is a measure of whether all expected data is actually present in a given data set. Data completeness can be measured for whole records as well as for attributes.

For example, the data set of all company employees is complete on a record level when it contains a record for every employee.

On an attribute level, the situation is more complex since quite a lot of data might be available for each employee (name, role, address, email, phone number, etc.) and some of these attributes might be mandatory (name, address) while others are only optional (phone number, personal email).

2. Accuracy

Accuracy describes the degree to which given data correctly describes the real world object.

An employee data set, for example, might contain inaccurate data if employees do not report their address changes or if spelling or other errors are introduced during data entry.

Accuracy can be measured as percentage of entity attributes that match the properties of the real world object. This is of course often very hard to do since determining whether an object attribute is accurate can be difficult or impossible.

3. Consistency

Consistency is a measure of the synchronicity of different systems storing information about the same object.

Employee data, for example, might be stored in an HR system and a project management system. Most of the data is system specific but some data points will be common and they can easily become unsynchronized resulting in one system having lower quality data.

Improving the consistency of your data across your organization can even help fight a silo mentality and improve how a business works together.

4. Validity (Conformity)

Validity is the measure of how well data meets various requirements placed on attribute values such as value size, allowed values for enumerations, data format for systems that allow free form data (e.g. whether dates are stored as MM/dd/yyyy or dd/MM/yyyy in text files).

There are, of course, more sophisticated validations that implement complex, cross-field or cross-system validations.

Data often becomes invalid due to programming errors or data entry errors. It is often possible, as well as desirable, to design automated systems that can flag or even correct invalid records.

5. Integrity

Integrity is the measure of the validity of relationships between different data entities. This concept is often applied to database applications where various integrity constraints can be enforced directly by the database (foreign keys, unique constraints etc.).

You will also encounter relationships between data stored in non-relational data stores, in which case the integrity constraints must be enforced by the application logic.

Integrity is most commonly broken in cases where common data attributes are stored in multiple systems.

6. Timeliness

Timeliness reflects the degree to which data represents reality at a specific point in time. It can be thought of as the difference between something happening and that change being recorded in the system and propagated to users.

Blog: 6 Data Quality Metrics You Can't Afford To Ignore

4 Data Quality Pitfalls

1. Unrealistic Data Entry Expectations

Many data quality issues are created at the point that data is entered into computer. Even though the degree of automation in many organizations can be high, there is always a considerable amount of data that is manually entered.

Manual data entry inevitably brings with it mistakes whether incorrect spelling or entering data into the wrong field. Some mistakes can be caused by lack of training or a poor user interface and some applications perform little or no validation.

Case study: How a self-learning address validation solution repairs 90% of addresses instantly without the need for human intervention.

2. Changing Business Processes

Data quality issues are often caused by business process changes that are incorrectly communicated. It could happen that a new attribute is added to an entity and not all systems that work with that entity are properly updated resulting in lower quality data.

If invalid data has propagated to other systems, correcting the data at that point can be problematic and costly, especially if it impacts multiple systems. It is, therefore, much easier and less costly to properly enforce validation rules in the source application.

It is important to always consider the whole application landscape even when implementing changes that might seem trivial (like adding an attribute to entity).

3. Poor Data Organization

Today’s world is powered by data. What was considered a huge volume of data just a few years ago is now considered normal and with storage getting cheaper, many organizations now tend to store data indefinitely.

While keeping data organized might seem to be a simple task, it is actually a major challenge in larger businesses. In many cases, no single party or department is formally assigned responsibility, which often leads to each department doing their own thing.

A seemingly trivial but typical example is the naming of documents. If there’s no standard for document naming and storage, improvised systems (wikis, portals etc.) and conventions arise, making it hard to locate documents and resulting in growing inefficiencies. Staff turnover issues compound this still further.

Learn more: Align your business processes, data governance requirements and IT infrastructure to deliver more consistency.

4. Data Quality Monitoring

If the quality of your data isn’t monitored regularly, you risk introducing errors that can flow through multiple systems and make your end results misleading or just inaccurate.

Choosing which attributes to monitor, and how to monitor them, is a potential pitfall area. The decision needs to be made carefully during the project’s design phase. If you have too much monitoring with overly detailed reports, you can overwhelm stakeholders resulting in important problems being overlooked. On the other hand, too little monitoring is undesirable as important observations are simply not being reported.

How To Maintain Data Quality

Data Discovery

Data discovery is an often overlooked and underestimated part of any data related project. People often make false assumptions about their data as most people view the data from their own perspective only. However, data discovery is a critical part of the design phase as it provides the input for the scope definition and project estimates.

Discovery involves a formal analysis of the data itself, finding relationships within and between datasets. One also has to consider all applications that use the data and need to be reconfigured or updated, especially in the case of data migrations.

As such, data discovery in larger organizations can be an activity involving various teams and often cross departmental boundaries.

Data Validation

Data validation is an important process in achieving sufficient data quality in the target system and goes hand in hand with data cleansing. The validation process flags any data that doesn't meet the validation criteria. Data that doesn't pass the validation step is marked for cleansing.

Data validation can be a manual or an automated process. A high degree of automation is, of course, preferable and indeed necessary for larger systems. Automated data validation can mean that the vast majority of data is repaired without any human intervention - meaning fewer errors and removing bottlenecks.

Nevertheless, some manual validation may still be necessary, especially for the most important data that cannot be allowed through without human confirmation or correction.

Data Validation and Cleansing Saves $800,000

Data Cleansing

During data discovery, you will often find that the data cannot be used in its current form and first needs to be cleansed. There are many different reasons for low data quality, ranging from simple ones (anything involving human data entry is likely to have various errors including typos, missing data, data misuse etc.) all the way to complex issues stemming from improper data handling practices and software bugs.

Data cleansing is the process of taking “dirty” data in its original location and cleaning it before it is used in any data transformation. The data cleansing process is often an integral part of the business logic with the data being cleaned in the transformation but left unchanged in the originating system.

Other approaches can also be used. For example, a separate, clean copy of the data can be created if the data needs to be reused or if cleansing is time-consuming and requires human interaction.

Recognising that there will always be bad data, and building effective, automated error handling into your processes, is crucial to maintaining a high level of data quality.

4 Data Quality Best Practices

Proactive data quality management is vital to establishing and maintaining data quality in your organization. Here are four key best practices you should follow to avoid the situation where bad data flows to multiple places across the business.

1. Fixing Data at Source

There are many situations where data propagates to multiple different systems from a single source application. Failure to enforce data quality in the source application results in low quality data propagating to those other systems and having a multiplying effect.

It is therefore well worth the effort of implementing as many validations as possible within source applications.

2. Data Validation on Input

Any process that submits data should include validation. Many downstream issues can be prevented by implementing basic validation checks such as:

- date validation (date format, or even whether a date is not in the future, for example)

- verifying values against lists (e.g. checking state names or country codes)

- checking for reasonable values of all required fields (e.g. do not allow “n/a” as person’s last name)

3. Data Quality Monitoring

Monitoring of data quality for every involved application is vital in order to prevent pollution of multiple applications with low quality data coming from a single source.

Monitoring often consists of data validation rules that are applied to each record as it is transformed into its destination format. It's important to balance the monitoring however to ensure that the business gets enough information to spot any problems, without generating too much output for people to be able to respond to.

4. Reporting

Reporting is a key part of maintaining data quality. Well executed reporting ensures that stakeholders get all the status information very quickly and are able to react in a short timeframe. This, in turn, shortens the time it takes to resolve any data quality issues, and improve any processes that are regularly resulting in bad quality data, so you can manage the bad data that's (inevitably) in your systems.

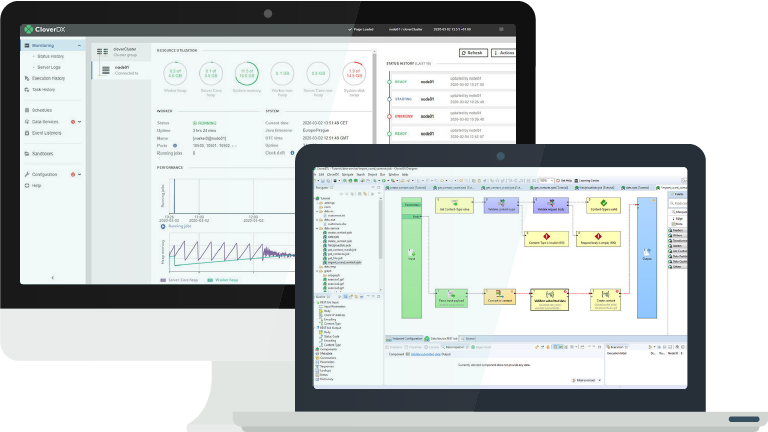

Data Quality With CloverDX

High-quality data is the cornerstone of any growing business. Ensure quality and accuracy in your data pipelines and facilitate effective decision-making with built-in data profiling, cleansing and validation features. With CloverDX, you can expect:

- Automated data validation and error handling integrated into data workflows.

- Proactive data quality management with built-in data profiling, cleansing, and validation features.

- Reduced manual effort through automated data validation processes.

- Enhanced data transparency and trackability, facilitating easier troubleshooting and maintenance.

- Comprehensive error reporting, accessible to non-technical users. Customizable and shareable validation rules.

- Scalable solutions that grow with business needs.

CloverDX has significantly improved data quality management for many businesses, including creating a self-learning address validation solution that repairs 90% of addresses instantly and saving one business $800,000 and increasing marketing effectiveness by 12%.

What the process looks like

Data quality management involves addressing challenges and objectives specific to your business. Our initial step is to understand your unique challenges and your goals for data quality. During this discovery phase, we will explore questions such as:

- What specific data quality challenges are you currently facing?

- How are these challenges impacting your business operations?

- What are your key objectives in improving data quality?

- Do you have any existing data validation or error handling processes?

- How do you currently manage data profiling, cleansing, and validation?

- What level of automation do you seek in your data quality processes?

- Who are the primary users of your data, and what are their needs?

- Are there any specific industry standards or compliance requirements you need to meet?

- How do you envision scalable solutions fitting into your long-term business goals?

By understanding your unique data challenges we can assist you in conceptualizing and developing your ideal data quality framework. This approach enables you to enhance data accuracy and integrity more efficiently, delivering greater value in less time.

Read more about how the features of CloverDX can help you with your data quality

Data Quality with CloverDX

We can help

Our demos are the best way to see how CloverDX works up close.

Your time is valuable, and we are serious about not wasting a moment of it. Here are three promises we make to everyone who signs up:

- Tailored to you. Every business is unique. Our experts will base the demo on your unique business use case, so you can visualize the direct impact our platform can have.

- More conversation than demonstration. Have a question? We can help. Quality of data, changing business needs and scalability are just a few of the challenges that can arise when improving data mangement. Whatever concerns or reservations you have, let us know.

- Zero obligation. We’ve all been there. You spend some time hearing about a product or service… and then comes the hard sell. Our team doesn’t ‘do’ pushy. We prefer honest, open communication that leaves you feeling informed and confident.

Get in touch for a personalized demo.

Read More about Data Quality